Climate

Started on Jan. 5, 2022

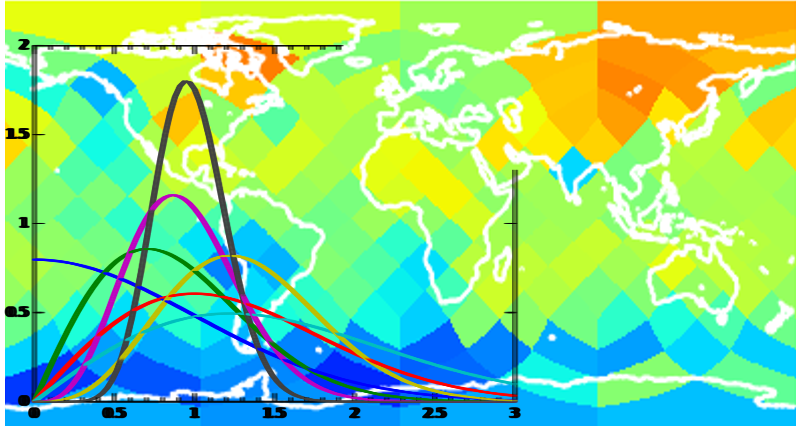

The prediction of temperature anomalies on interannual timescales (1 to 5 years) is one of the most challenging topics in climate science. A recent study has shown that local anomalies in the Earth's temperature have some predictability 1 to 5 years into the future, even while temperatures are average at a global scale. The aim of this study is to demonstrate this by predicting temperature anomalies at a regional scale.

In climate science, because of the inherent chaotic nature of the studied system, predictions have to be made in a probabilistic framework. Indeed, for risk assessment and early mitigation, the likelihood of extreme events is potentially as important as the knowledge of the expected event. Hence, a skillful prediction has to be accurate (minimal prediction error) and reliable (good sampling of prediction spread).

Another challenge of climate science is the lack or the limited amount of data to train any prediction system. Thus, this challenge aims to find an algorithm that predicts the temperature anomalies of the next years using the past 10 years of data and to provide a probabilistic prediction, or at least the expected prediction and its uncertainty (within a Gaussian assumption).

Ref: Sévellec, F., & Drijfhout, S. S. (2018). A novel probabilistic forecast system predicting anomalously warm 2018-2022 reinforcing the long-term global warming trend. Nature Communications, 9(1), [3024]. DOI: 10.1038/s41467-018-05442-8

How accurately can we predict regional temperature anomalies based on past and neighbouring climate observations?

The input data consist of 7 data sets of surface temperature anomalies covering the whole globe. Each data set is composed of 10 years of data from 22 climate model realizations at 3072 points scattered all over the world. The target data consist of average temperature anomaly values at 192 positions over the next 5 years. Thanks to the HEALPix nested ordering property, the downgrading from 3072 points to 192 points is given by the mean of each group of 16 consecutive values (e.g., in Python: x_down = numpy.mean(x.reshape(192,16),1)).
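The downgrading step can be sketched as a small helper. This is a minimal illustration rather than the challenge's own code: the function name `downgrade` and the synthetic input are assumptions, and the helper simply generalizes the one-line reshape above so that it also works on a stack of maps (for example, one row per model).

```python
import numpy as np

N_FINE = 3072    # points on the fine grid (from the challenge description)
N_COARSE = 192   # regions on the coarse grid (from the challenge description)


def downgrade(x):
    """Average each run of 16 consecutive values along the last axis.

    Assumes the last axis holds the 3072 points in nested ordering, so that
    consecutive groups of 16 points belong to the same coarse region.
    `downgrade` is a hypothetical helper name, not part of the challenge files.
    """
    x = np.asarray(x, dtype=float)
    if x.shape[-1] != N_FINE:
        raise ValueError(f"expected {N_FINE} points on the last axis, got {x.shape[-1]}")
    group = N_FINE // N_COARSE  # 16 fine points per coarse region
    return x.reshape(*x.shape[:-1], N_COARSE, group).mean(axis=-1)


# Synthetic stand-in for one map of anomalies (not real data).
x = np.arange(N_FINE, dtype=float)
x_down = downgrade(x)

# The same helper applied to a stack of 22 maps, one per model.
stack_down = downgrade(np.ones((22, N_FINE)))
```

For a single map the result matches `numpy.mean(x.reshape(192, 16), 1)`; the extra leading axes are only a convenience.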

The two files train_X.csv and test_X.csv, containing 5 and 2 data sets respectively, share the same format with 6 columns:

DATASET: the dataset id. There are several independent data sets per file. Each dataset is composed of:

MODEL: model id (1-22) for the models and (0) for the actual observation

The two files train_Y.csv and test_Y.csv contain temperature anomaly observations for the same 5 and 2 data sets as in the X files. They have 5 columns:

The random_bench.csv file is an example of a candidate file. It should contain the predicted mean temperature values and the corresponding variance for 192 regions for each data set. This file should have the same 5 columns as the Y files:

Two additional Python scripts should help in understanding the challenge:

climate_example.py provides an example of prediction that uses the last known value for the mean and the distribution of the 22 models for the variance.

show_model.py shows the 10-year map and the corresponding prediction for one model. This Python script uses the healpy package (https://healpy.readthedocs.io/en/latest/) to describe the Earth coordinates. The script is inside the metric_plot.pdf. NB: Healpy is currently not supported on Windows.

To estimate the validity of the predictions we propose to use two different measures: the coefficient of determination (R2), which shows the skill of the mean prediction; and the reliability, which measures the accuracy of the spread in the prediction. The metric thus characterizes both the accuracy of the predicted mean and the consistency of the predicted error with the actual one. You can check out the climate_metric.py file for details.
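The two measures can be illustrated with a short sketch. The exact formulas used for scoring live in climate_metric.py and are not reproduced here, so what follows is an assumption: R2 is the standard coefficient of determination, and the reliability is taken to be the root-mean-square of the prediction error normalized by the predicted standard deviation, which equals 1 when the predicted spread matches the actual error. The function names and the synthetic data are hypothetical.

```python
import numpy as np


def r2_score(y_true, y_mean):
    """Standard coefficient of determination of the mean prediction."""
    y_true = np.asarray(y_true, dtype=float)
    y_mean = np.asarray(y_mean, dtype=float)
    ss_res = np.sum((y_true - y_mean) ** 2)         # residual sum of squares
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)  # total sum of squares
    return 1.0 - ss_res / ss_tot


def reliability(y_true, y_mean, y_var):
    """Assumed definition: RMS of the error scaled by the predicted std.

    Values below 1 suggest an over-dispersive (too wide) prediction,
    values above 1 an over-confident (too narrow) one.
    """
    y_true = np.asarray(y_true, dtype=float)
    y_mean = np.asarray(y_mean, dtype=float)
    y_var = np.asarray(y_var, dtype=float)
    return np.sqrt(np.mean((y_true - y_mean) ** 2 / y_var))


# Synthetic example for 192 regions (not real data): the true values scatter
# around the predicted mean with a standard deviation of 0.5, and the
# predicted variance is set to match that scatter.
rng = np.random.default_rng(0)
y_mean = rng.normal(size=192)
y_true = y_mean + rng.normal(scale=0.5, size=192)
y_var = np.full(192, 0.25)

r2 = r2_score(y_true, y_mean)
rel = reliability(y_true, y_mean, y_var)
```

Because the predicted variance matches the scatter used to generate the data, the reliability in this synthetic case should come out close to 1.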

The benchmark for this challenge consists of the mean and variance of the 22 model predictions (rows where TIME = 10). This simple benchmark achieves a score of -0.23 on the test data.
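A benchmark of this kind could look like the sketch below. It is an illustration under stated assumptions, not the organizers' script: the function name `ensemble_benchmark` is hypothetical, the input is assumed to be already arranged as one TIME = 10 map per model (22 rows of 3072 nested-ordered points), each map is downgraded to 192 regions before the statistics are taken, and the variance is the population variance across models.

```python
import numpy as np

N_MODELS = 22    # climate model realizations (from the challenge description)
N_FINE = 3072    # points on the fine grid
N_COARSE = 192   # target regions


def ensemble_benchmark(last_maps):
    """Mean and variance across models of the last known map.

    last_maps: array of shape (22, 3072), one TIME = 10 map per model,
    assumed to be in nested ordering. Returns two arrays of shape (192,).
    """
    last_maps = np.asarray(last_maps, dtype=float)
    if last_maps.shape != (N_MODELS, N_FINE):
        raise ValueError(f"expected shape {(N_MODELS, N_FINE)}, got {last_maps.shape}")
    # Downgrade each model map by averaging groups of 16 consecutive points.
    coarse = last_maps.reshape(N_MODELS, N_COARSE, N_FINE // N_COARSE).mean(axis=2)
    # Statistics across the 22 models, region by region.
    return coarse.mean(axis=0), coarse.var(axis=0)


# Synthetic stand-in for the 22 model maps at TIME = 10 (not real data).
rng = np.random.default_rng(1)
maps = rng.normal(size=(N_MODELS, N_FINE))
pred_mean, pred_var = ensemble_benchmark(maps)
```

The two returned arrays correspond to the predicted mean and variance that a candidate file is expected to provide for each of the 192 regions of one data set.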
